Build outbound AI calls with Python and OpenAI Realtime

Build outbound AI calls with Python, Telnyx Media Streaming, and OpenAI Realtime. Includes Call Control, WebSocket bridge, and PCMU audio.

Most voice AI demos start with an inbound call. A customer calls your number, your app answers, and the AI assistant starts talking.

Outbound calling is a little different. Your app starts the call, waits for the person to answer, and then connects the live phone audio to an AI model. That pattern is useful for appointment reminders, lead qualification, customer follow-up, and other outbound voice AI workflows.

In this tutorial, you will build that flow with Telnyx Voice API, Telnyx Media Streaming, Python, and the OpenAI Realtime API.

By the end, you will have a small FastAPI app that:

- Places an outbound phone call through Telnyx.

- Streams the callee's live audio to your Python server over a WebSocket.

- Sends the audio to OpenAI Realtime.

- Streams the model's spoken response back into the Telnyx call.

If you are still choosing a voice AI architecture, start with our broader guide to voice AI for developers. If you already know you need a code-first outbound call flow, this tutorial gives you the implementation path.

You do not need SIP for this tutorial. SIP is only needed when you are connecting Telnyx to SIP infrastructure such as a PBX, SIP trunk, SIP URI, or contact center platform. For this build, you need a Telnyx Call Control application, an outbound voice profile, a Telnyx number, and a public WebSocket URL for your Python app.

If you want Telnyx to host the voice agent instead of managing the OpenAI Realtime WebSocket yourself, use Telnyx AI Assistants. This guide is for developers who want direct control over the Realtime session and media bridge.

What you will build

The app has three routes:

- POST /call places an outbound Telnyx call.

- POST /webhooks/telnyx receives Telnyx call status events.

- WebSocket /media-stream bridges audio between Telnyx and OpenAI Realtime.

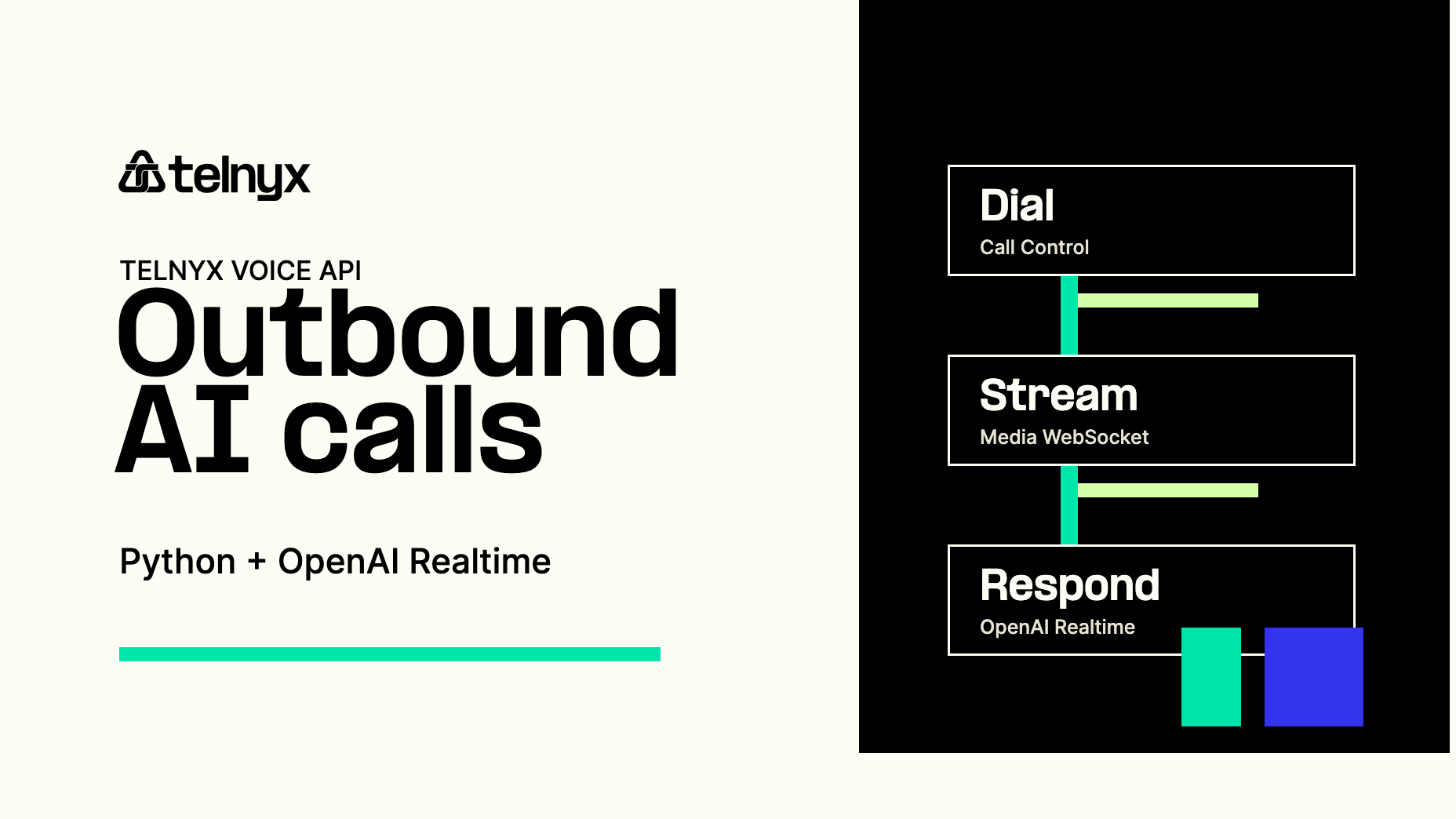

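At a high level, audio flows from the callee's phone to Telnyx, over the media WebSocket to your FastAPI server, on to OpenAI Realtime, and then back along the same path.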

The important detail is that your app owns the bridge. Telnyx handles the outbound call and live media stream. OpenAI Realtime handles the spoken response. Your FastAPI server passes audio between the two.
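The bridge your app owns can be pictured as two forwarding loops running at the same time, one per direction. The sketch below is a minimal illustration of that pattern, not the tutorial's server code: it uses asyncio.Queue objects as stand-ins for the two WebSocket connections, and the names pump and bridge are hypothetical.

```python
import asyncio


async def pump(source: asyncio.Queue, sink: asyncio.Queue) -> None:
    """Forward items from source to sink until a None sentinel arrives."""
    while True:
        item = await source.get()
        await sink.put(item)
        if item is None:  # None marks "this side hung up"
            break


async def bridge(
    telnyx_in: asyncio.Queue,
    openai_out: asyncio.Queue,
    openai_in: asyncio.Queue,
    telnyx_out: asyncio.Queue,
) -> None:
    """Run both directions concurrently, as a real media bridge would."""
    await asyncio.gather(
        pump(telnyx_in, openai_out),   # callee audio toward the model
        pump(openai_in, telnyx_out),   # model audio toward the call
    )
```

In a real server, each queue would be replaced by reads from and writes to the corresponding WebSocket, but the two-loop structure stays the same.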

When to use this approach

Use this approach when you want your own application to manage the OpenAI Realtime session directly. It is a good fit for outbound AI calling when you need custom prompts, custom tool calls, your own call state, or direct control over the audio bridge.

Use Telnyx AI Assistants when you want Telnyx to host the voice agent runtime for you. Use custom LLM support for Telnyx AI Assistants when you want the Telnyx voice agent stack but still want to route inference to an OpenAI-compatible endpoint.

For more background on the WebSocket media layer used in this tutorial, read our guide to building real-time voice AI solutions using WebSockets.

Prerequisites

Before you start, make sure you have:

- A Telnyx account.

- A Telnyx API key.

- A Telnyx number with voice capabilities.

- A Telnyx Call Control application.

- An outbound voice profile connected to that Call Control application.

- An OpenAI API key with access to Realtime.

- Python 3.10 or later.

- A public HTTPS URL for local testing, such as ngrok or Cloudflare Tunnel.

You will also need the ID of your Telnyx Call Control application. In the Calls API, this value is called connection_id.

In the Telnyx Portal, configure the Call Control application's webhook URL to point at your public webhook endpoint, which is your public HTTPS base URL followed by /webhooks/telnyx.

The sample also passes webhook_url when it creates the outbound call. Setting the application webhook in the Portal gives you a clear default for all call events.

Create the project

Start with a new project folder.

Add the Python dependencies in requirements.txt.

Install the dependencies.

Create an .env file for your credentials and local tunnel URL.

Use E.164 format for TELNYX_FROM_NUMBER, for example +18005550101.
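It can help to validate these values when the app starts, so a malformed number fails fast instead of producing a rejected call. The following is a minimal sketch, assuming the environment variable names TELNYX_API_KEY, TELNYX_CONNECTION_ID, and OPENAI_API_KEY (hypothetical names chosen for illustration; only TELNYX_FROM_NUMBER and PUBLIC_BASE_URL appear in this tutorial).

```python
import os
import re
from dataclasses import dataclass
from typing import Mapping

# E.164: a leading "+", then up to 15 digits, the first of which is not 0.
E164_PATTERN = re.compile(r"\+[1-9]\d{1,14}")

# All names except TELNYX_FROM_NUMBER and PUBLIC_BASE_URL are assumptions.
REQUIRED_VARS = (
    "TELNYX_API_KEY",
    "TELNYX_CONNECTION_ID",
    "TELNYX_FROM_NUMBER",
    "OPENAI_API_KEY",
    "PUBLIC_BASE_URL",
)


def is_e164(number: str) -> bool:
    """Return True if the whole string is a plausible E.164 number."""
    return E164_PATTERN.fullmatch(number) is not None


@dataclass(frozen=True)
class Settings:
    telnyx_api_key: str
    telnyx_connection_id: str
    telnyx_from_number: str
    openai_api_key: str
    public_base_url: str


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read required settings and fail early on missing or malformed values."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    if not is_e164(env["TELNYX_FROM_NUMBER"]):
        raise ValueError("TELNYX_FROM_NUMBER must be in E.164 format")
    return Settings(
        telnyx_api_key=env["TELNYX_API_KEY"],
        telnyx_connection_id=env["TELNYX_CONNECTION_ID"],
        telnyx_from_number=env["TELNYX_FROM_NUMBER"],
        openai_api_key=env["OPENAI_API_KEY"],
        public_base_url=env["PUBLIC_BASE_URL"].rstrip("/"),
    )
```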

Build the FastAPI server

Now create server.py.

Run the server locally

Open a public tunnel to your local server before starting the app.

Copy the HTTPS forwarding URL into .env as PUBLIC_BASE_URL.

For example:

Then start the FastAPI server.

Place the outbound call

Place a test call by sending a request to your local server.

Telnyx places the outbound call from TELNYX_FROM_NUMBER to the to number. When the person answers, Telnyx opens a WebSocket connection to /media-stream, sends the call audio to your app, and accepts the audio your app sends back.
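If you prefer to trigger the test call from Python, the request can be sketched with the standard library. This example assumes the server listens on http://localhost:8000 and that /call accepts a JSON body with a single to field; both are assumptions for illustration, so adjust them to match your server.py.

```python
import json
import urllib.request


def build_call_request(
    to_number: str, base_url: str = "http://localhost:8000"
) -> urllib.request.Request:
    """Build (but do not send) a POST /call request for one destination."""
    body = json.dumps({"to": to_number}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/call",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
```

Once the server and tunnel are running, passing the result to urllib.request.urlopen sends the request.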

How the code works

The /call route creates the outbound call with POST /v2/calls. These fields do the main work:

- connection_id, the ID of your Call Control application.

- to, the destination phone number.

- from, the Telnyx number used as caller ID.

- stream_url, the WebSocket URL Telnyx connects to for live media.

- stream_codec, set to PCMU so inbound audio sent to OpenAI uses the same codec OpenAI expects.

- stream_bidirectional_mode, set to rtp so the app can send audio back into the call.

- stream_bidirectional_codec, set to PCMU.

- stream_bidirectional_sampling_rate, set to 8000.

- send_silence_when_idle, set to true so Telnyx generates silence RTP packets while your app is not sending audio back.
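Put together, the request body for POST /v2/calls might be assembled as in the sketch below. The helper names are hypothetical, and the stream_track value of inbound_track is an assumption (this tutorial names the field but not its value). The other values follow the field descriptions above.

```python
def to_websocket_url(public_base_url: str, path: str = "/media-stream") -> str:
    """Turn an http(s) base URL into the matching ws(s) URL for the media route."""
    base = public_base_url.rstrip("/")
    if base.startswith("https://"):
        base = "wss://" + base[len("https://"):]
    elif base.startswith("http://"):
        base = "ws://" + base[len("http://"):]
    return base + path


def build_dial_payload(
    connection_id: str, to_number: str, from_number: str, public_base_url: str
) -> dict:
    """Assemble the JSON body for POST /v2/calls with bidirectional PCMU streaming."""
    return {
        "connection_id": connection_id,
        "to": to_number,
        "from": from_number,
        "webhook_url": public_base_url.rstrip("/") + "/webhooks/telnyx",
        "stream_url": to_websocket_url(public_base_url),
        "stream_track": "inbound_track",  # assumption: stream only the callee's audio
        "stream_codec": "PCMU",
        "stream_bidirectional_mode": "rtp",
        "stream_bidirectional_codec": "PCMU",
        "stream_bidirectional_sampling_rate": 8000,
        "send_silence_when_idle": True,
    }
```

Deriving stream_url from the same base URL as the webhook keeps the tunnel address in one place, so changing PUBLIC_BASE_URL updates both.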

The /media-stream WebSocket receives Telnyx stream events. A start event tells you the media stream is ready, and each media event carries a base64-encoded audio payload. The app forwards those payloads to OpenAI Realtime with input_audio_buffer.append.
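The inbound translation step can be sketched as a small pure function. It assumes Telnyx media frames arrive as JSON text with the audio at media.payload, and it leaves the WebSocket handling out; the function name is hypothetical.

```python
import json
from typing import Optional


def telnyx_to_openai(raw_message: str) -> Optional[str]:
    """Convert one Telnyx stream message into an OpenAI append event.

    Returns None for non-media events (such as start) or frames without audio.
    """
    event = json.loads(raw_message)
    if event.get("event") != "media":
        return None
    payload = event.get("media", {}).get("payload")
    if not payload:
        return None
    # The base64 payload is passed through unchanged; no transcoding happens here.
    return json.dumps({"type": "input_audio_buffer.append", "audio": payload})
```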

OpenAI Realtime sends the model's spoken output as base64 audio deltas. The current GA event name is response.output_audio.delta. Some older OpenAI beta examples and the older Telnyx demo repo use response.audio.delta, so the sample accepts both. New builds should treat response.output_audio.delta as the main event.
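The return direction can be sketched the same way. This example accepts both event names described above and wraps the audio in a Telnyx media frame. It assumes the audio arrives in the event's delta field and that Telnyx accepts outbound frames shaped as an event of type media with the audio at media.payload; the function name is hypothetical.

```python
import json
from typing import Optional

# GA name first, older beta name second, as described above.
AUDIO_DELTA_EVENTS = {"response.output_audio.delta", "response.audio.delta"}


def openai_to_telnyx(raw_message: str) -> Optional[str]:
    """Convert one OpenAI Realtime audio delta into a Telnyx media frame.

    Returns None for any event that does not carry audio.
    """
    event = json.loads(raw_message)
    if event.get("type") not in AUDIO_DELTA_EVENTS:
        return None
    delta = event.get("delta")
    if not delta:
        return None
    return json.dumps({"event": "media", "media": {"payload": delta}})
```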

Why this uses PCMU

Phone calls usually use telephony codecs, not browser microphone audio. In this example, Telnyx streams PCMU audio at an 8 kHz sampling rate. OpenAI Realtime supports audio/pcmu for audio input and output, so the app can pass base64 audio between Telnyx and OpenAI without doing its own transcoding.
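To make the 8 kHz figure concrete: PCMU stores one byte per sample, so one second of audio is 8,000 bytes. The sketch below works out frame sizes from that. The 20 ms frame length is an assumption used for illustration (a common RTP packet interval), not a value stated in this tutorial.

```python
import base64

SAMPLE_RATE_HZ = 8000   # PCMU sampling rate used in this tutorial
BYTES_PER_SAMPLE = 1    # G.711 mu-law encodes each sample in 8 bits


def frame_bytes(frame_ms: int) -> int:
    """Number of PCMU bytes in a frame of the given length."""
    return SAMPLE_RATE_HZ * BYTES_PER_SAMPLE * frame_ms // 1000


def payload_duration_ms(payload_b64: str) -> float:
    """Audio duration represented by one base64 PCMU payload."""
    raw = base64.b64decode(payload_b64)
    return len(raw) * 1000 / (SAMPLE_RATE_HZ * BYTES_PER_SAMPLE)


# A 20 ms frame of mu-law silence (0xFF decodes to a zero-amplitude sample).
silent_frame = base64.b64encode(bytes([0xFF]) * frame_bytes(20)).decode("ascii")
```

A helper like payload_duration_ms is handy when logging, because it lets you check how much audio has passed through the bridge in each direction.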

The stream_codec and stream_bidirectional_codec fields are both set to PCMU on purpose. Some regions and carriers may negotiate PCMA by default. If inbound audio is PCMA but OpenAI is configured for PCMU, the model will receive distorted audio. Setting stream_codec to PCMU keeps the inbound stream aligned with the OpenAI session configuration.

The current Telnyx Dial API reference lists stream_codec as a supported POST /v2/calls field. If you are using an older SDK or an account configuration where that field is not accepted on Dial, start the call without any stream fields in the Dial request. That means removing stream_url, stream_track, stream_codec, stream_bidirectional_mode, stream_bidirectional_codec, stream_bidirectional_sampling_rate, and send_silence_when_idle from /v2/calls. Then wait for the call.answered webhook and call the streaming_start command with stream_url, stream_codec, and the bidirectional stream fields. The streaming_start endpoint also supports stream_codec.
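That fallback can be sketched as a function that inspects the webhook body and, for call.answered, returns the URL and JSON body for the streaming_start command. It assumes the webhook nests the event type at data.event_type and the call ID at data.payload.call_control_id, and it only builds the request; sending it with your API key is left to your HTTP client. The function name is hypothetical.

```python
from typing import Optional, Tuple

TELNYX_API_BASE = "https://api.telnyx.com/v2"


def build_streaming_start(
    webhook_body: dict, stream_url: str
) -> Optional[Tuple[str, dict]]:
    """Return (url, json_body) for streaming_start, or None for other events."""
    data = webhook_body.get("data", {})
    if data.get("event_type") != "call.answered":
        return None
    call_control_id = data.get("payload", {}).get("call_control_id")
    if not call_control_id:
        return None
    url = f"{TELNYX_API_BASE}/calls/{call_control_id}/actions/streaming_start"
    body = {
        "stream_url": stream_url,
        "stream_codec": "PCMU",
        "stream_bidirectional_mode": "rtp",
        "stream_bidirectional_codec": "PCMU",
        "stream_bidirectional_sampling_rate": 8000,
    }
    return url, body
```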

That codec choice is why the OpenAI session update sets both the input and output audio formats to audio/pcmu.

OpenAI's current Realtime API uses this nested session shape with audio.input.format and audio.output.format. Older beta examples use flat fields such as input_audio_format: "g711_ulaw" and the OpenAI-Beta: realtime=v1 header. This tutorial uses the current GA shape.
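As a rough sketch of that nested shape, a session.update message with PCMU in both directions could be built like this. The instructions text is a placeholder and the function name is hypothetical; check the field names against the current OpenAI Realtime reference before relying on them.

```python
import json


def build_session_update(instructions: str) -> str:
    """Build a GA-style session.update event that uses PCMU in both directions."""
    return json.dumps(
        {
            "type": "session.update",
            "session": {
                "type": "realtime",
                "instructions": instructions,
                "audio": {
                    # Matches stream_codec on the Telnyx side.
                    "input": {"format": {"type": "audio/pcmu"}},
                    # Matches stream_bidirectional_codec on the Telnyx side.
                    "output": {"format": {"type": "audio/pcmu"}},
                },
            },
        }
    )
```

The app would send this message once, right after the OpenAI WebSocket opens and before any audio is appended.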

Telnyx also supports L16 for media streaming. If your model or audio pipeline expects linear PCM, L16 can be a better fit than PCMU. For this OpenAI Realtime bridge, PCMU keeps the sample simple because the Telnyx RTP payload and OpenAI audio/pcmu session format line up directly.

How this maps from Twilio to Telnyx

If you are coming from the Twilio tutorial, the Telnyx version uses Call Control for outbound call creation and Media Streaming for live audio. The shape of the app is similar: create the call, open a media WebSocket, send caller audio to OpenAI Realtime, and send the model's audio back into the call.

The main Telnyx pieces are:

- POST /v2/calls instead of creating the outbound call through Twilio Programmable Voice.

- Telnyx Media Streaming instead of Twilio Media Streams.

- Call Control webhooks for call status events.

- A FastAPI WebSocket route that bridges Telnyx media frames to OpenAI Realtime events.

You do not need SIP for this tutorial. SIP is only for connecting Telnyx to SIP infrastructure such as a PBX, SBC, SIP trunk, or SIP endpoint.

You also do not need TeXML. Use TeXML when you prefer XML call instructions or want TwiML-style call control. Telnyx supports media streaming with the <Stream> verb, so you can build the same OpenAI bridge pattern with TeXML, but Call Control keeps the Python example direct.

When to use Telnyx AI Assistants instead

Use Telnyx AI Assistants when you want Telnyx to host the voice agent flow for you. In that path, Telnyx handles the conversational voice stack and you do not need to maintain the OpenAI Realtime WebSocket bridge yourself.

This tutorial is the right fit when you want your own app to manage prompts, call state, tool calls, and the OpenAI Realtime session. AI Assistants are the simpler fit when you want the voice agent runtime managed for you.

Before testing this with real callers, make sure the workflow fits the calling rules for your use case and destination country. Confirm consent, calling hours, AI disclosure, webhook signature validation, rate limits, logging, and a human handoff path before moving past internal test numbers.

That gives you the direct-code path: Telnyx places the outbound call, Media Streaming carries the live audio, and OpenAI Realtime generates the spoken response. If you want the same voice experience without owning the WebSocket bridge, use Telnyx AI Assistants instead.
