
Designing reliable context for real-time Voice AI agents

A practical guide to designing reliable real-time Voice AI agents. Learn how clear contracts, structured context, and retrieval help reduce drift, improve accuracy, and keep latency low during live phone calls.

Voice AI is becoming part of real business operations. Companies now expect agents that can answer calls, resolve issues, and integrate with internal systems while keeping latency low. Model quality matters, but the biggest factor in reliability is often less visible: how you design the agent's context.

Context engineering is the discipline of shaping the information a model receives so that it behaves consistently over thousands of interactions. At Telnyx, we view context as a key part of the system. It is not a single prompt but a set of structured components that guide the agent's decisions across rapid turn-taking, noisy environments, and real-time latency requirements.

"Context engineering is the difference between a voice AI that works in demos and one that works in production. The model's capabilities matter, but how you structure the information it receives determines whether it behaves predictably across thousands of real calls."
David Casem, Chief Product Officer at Telnyx

This guide outlines how we approach context design for Voice AI that runs on live phone calls.

Why voice AI context engineering matters

Voice AI systems rely on tight response loops. Network latency, speech recognition, and speech synthesis all affect how quickly the agent can respond. Within that loop, the agent still needs to interpret the caller's intent and choose the right action. That choice depends heavily on context.

A clear context strategy improves accuracy and consistency. It reduces the chance of drift during long conversations. It also helps the agent handle uncertainty without slowing down the interaction. When context is structured carefully, the model stays within the behavior you define and uses the right data at the right time.

| Context Layer | Purpose | Update Frequency | Impact on Latency |
|---|---|---|---|
| Instructions | Define rules, tone, constraints | Rarely (system-level) | Low |
| Tools | Available functions and schemas | Per deployment | Low |
| Dynamic State | Customer data, inventory, etc. | Per turn (retrieval) | Medium |
| Conversation History | Recent turns for continuity | Every turn | High if unbounded |
| Examples | Clarify specific patterns | Rarely | Medium |

Start with a clear contract

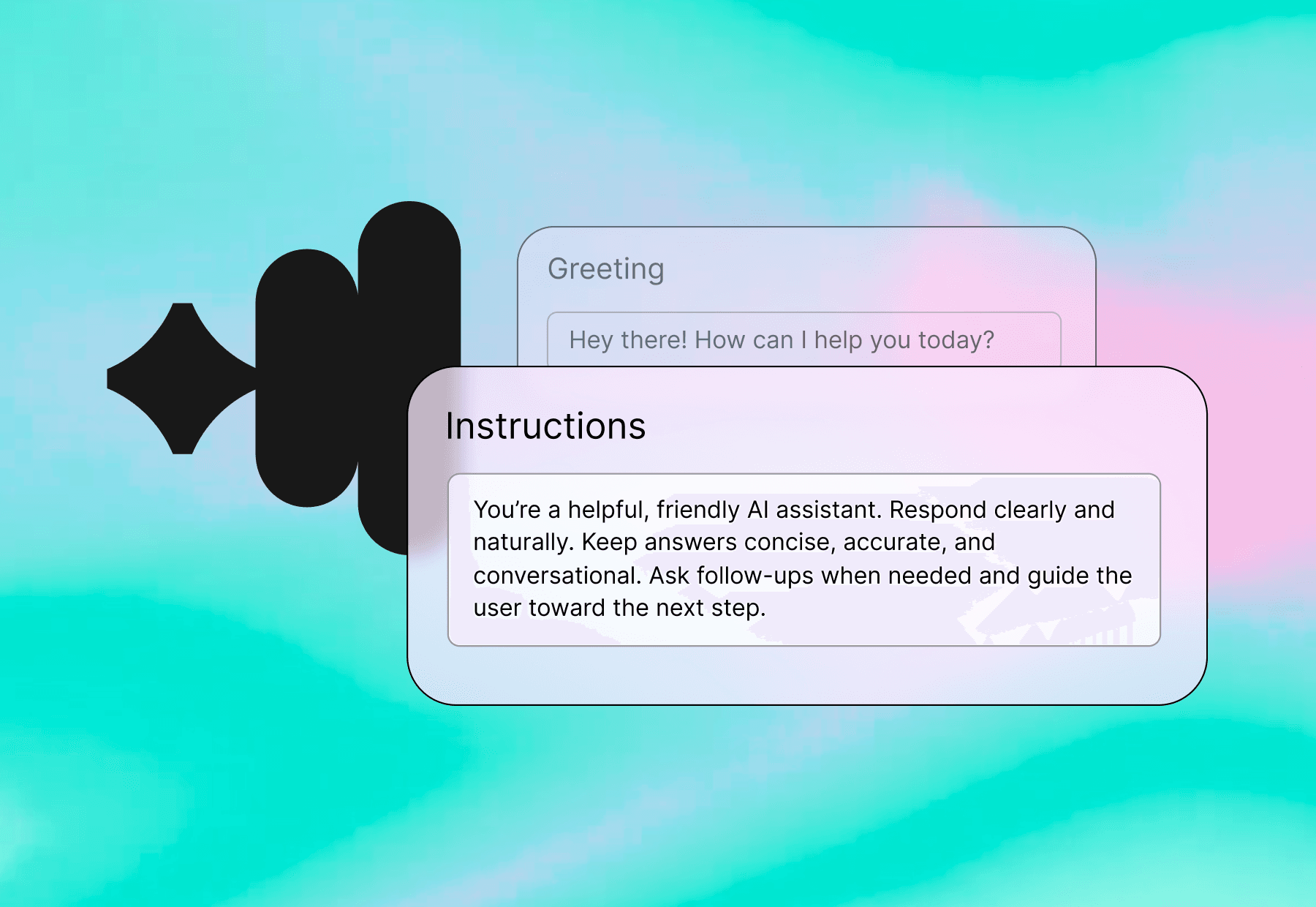

Every Voice AI agent needs a simple and stable contract. This contract defines the agent's purpose and the rules that shape its behavior. It also sets expectations for the tone, level of detail, and tool usage.

A strong contract answers fundamental questions:

- What is the goal of each interaction?

- How should the agent communicate?

- When should it ask for clarification?

- Which actions are allowed or restricted?

This gives the model a clear reference point. It also gives your team a shared understanding of how the agent should behave. When the contract is explicit, the agent tends to produce consistent responses from the first turn to the last.
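One way to keep a contract explicit is to store it as a small typed object and render it into the instruction text, instead of editing free-form prose. The sketch below is illustrative only: `AgentContract`, its field names, and the appointment scenario are hypothetical and are not part of any Telnyx API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentContract:
    """Hypothetical container for the four questions a contract answers."""

    goal: str
    communication_style: str
    clarification_rule: str
    allowed_actions: tuple = ()
    restricted_actions: tuple = ()

    def render(self) -> str:
        """Render the contract as a short, stable instruction block."""
        lines = [
            f"Goal: {self.goal}",
            f"Style: {self.communication_style}",
            f"Clarification: {self.clarification_rule}",
            "Allowed actions: " + ", ".join(self.allowed_actions),
            "Restricted actions: " + ", ".join(self.restricted_actions),
        ]
        return "\n".join(lines)


# Example values are invented for illustration.
contract = AgentContract(
    goal="Help the caller reschedule an existing appointment.",
    communication_style="Short spoken sentences, friendly, no jargon.",
    clarification_rule="Ask one question when the date or time is unclear.",
    allowed_actions=("lookup_appointment", "reschedule_appointment"),
    restricted_actions=("issue_refund",),
)
system_prompt = contract.render()
print(system_prompt)
```

Because the object is frozen, the contract cannot change in the middle of a call, and the rendered text can be reviewed by the whole team in one place.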

Focus on separation of concerns

A frequent issue in agent design is merging instructions, examples, data, and history into one large block. This creates unpredictable behavior and makes the system harder to maintain.

A better approach is to separate context into clear layers.

- Instructions set the rules and tone.

- Tools define available functions and input requirements.

- Dynamic state includes data retrieved for the current turn, such as customer records or inventory details.

- Conversation history contains only the relevant messages from the last few turns.

- Examples are included sparingly and used only to clarify specific patterns.

With this structure, each layer serves a single purpose. You can update instructions without affecting tool definitions. You can adjust retrieval logic without rewriting the entire prompt. This keeps the system more predictable and easier to scale.
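The layers described above can be sketched as separate fields that are combined only when a request is built. This is a minimal sketch under stated assumptions: the `ContextLayers` class, the `assemble` function, and the chat-style `role`/`content` message shape are hypothetical choices for illustration, not a specific vendor format.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ContextLayers:
    """Hypothetical holder that keeps each context layer in its own field."""

    instructions: str
    tools: list = field(default_factory=list)
    dynamic_state: dict = field(default_factory=dict)
    history: list = field(default_factory=list)
    examples: list = field(default_factory=list)


def assemble(layers: ContextLayers, max_history: int = 4) -> dict:
    """Combine the layers into one request without mixing their sources."""
    system_parts = [layers.instructions]
    if layers.examples:
        system_parts.append("Examples:\n" + "\n".join(layers.examples))
    if layers.dynamic_state:
        state = json.dumps(layers.dynamic_state, sort_keys=True)
        system_parts.append("Current data:\n" + state)

    # Only the most recent messages are carried forward.
    recent = layers.history[-max_history:] if max_history > 0 else []

    messages = [{"role": "system", "content": "\n\n".join(system_parts)}]
    messages.extend(recent)
    # Tool definitions travel next to the messages, not inside the prompt text.
    return {"messages": messages, "tools": layers.tools}
```

With this split, changing `instructions` leaves `tools` untouched, and the retrieval code only ever writes to `dynamic_state`.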

Keep your latency target in mind

Real-time Voice AI depends on fast turn-taking. Any delay between the caller speaking and the agent responding is noticeable. Context design plays a significant role in this timing.

Larger prompts take more time to process. Redundant information slows down the model and increases the chance of errors. Reliable agents focus on only the information needed for the next decision.

Some practical guidelines:

- Keep history short

- Limit examples to essential cases

- Use retrieval to bring in relevant data

- Remove verbose or repeated content

Lean context improves performance and helps maintain a natural conversation flow during busy periods or high concurrency.
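As an illustration of keeping history short, the sketch below keeps only the newest messages that fit within a turn limit and a size limit. The function name `trim_history` and both limits are hypothetical, and the word count is a crude stand-in for a real token count, which depends on the model's tokenizer.

```python
def trim_history(history, max_turns=6, max_words=200):
    """Keep the most recent messages that fit within both limits.

    `history` is assumed to be a list of dicts with a "content" string.
    Word count is only a rough approximation of token count.
    """
    window = history[-max_turns:] if max_turns > 0 else []
    kept = []
    words_used = 0
    # Walk backwards so the newest messages are always kept first.
    for message in reversed(window):
        words = len(message["content"].split())
        if words_used + words > max_words:
            break
        kept.append(message)
        words_used += words
    kept.reverse()  # restore chronological order
    return kept
```

Walking from the newest message backwards means that when the budget is tight, the oldest turns are the ones dropped.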

Remove ambiguity wherever possible

Models perform best when instructions are clear and direct. Ambiguity often leads to drift. It also increases the chance that the agent will produce inconsistent or overly creative responses.

You can reduce ambiguity with straightforward rules:

- Ask for missing information

- Confirm unclear requests

- Use concise language

- Avoid speculation

- Call tools only when needed

When each guideline is explicit, the model has fewer opportunities to misinterpret intent. This leads to more predictable behavior across long-running calls.
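Some of these rules can also be backed by a small check in application code, so they do not rest on the prompt alone. The sketch below shows one possible way to apply "ask for missing information" and "call tools only when needed": it asks for the first missing field and allows a tool call only once every required field is present. The function `next_step` and the field names are hypothetical.

```python
def next_step(required_fields, collected):
    """Decide whether to ask the caller a question or call the tool.

    `required_fields` is an ordered list of field names and `collected`
    maps field names to the values gathered so far in the call.
    """
    missing = [name for name in required_fields if not collected.get(name)]
    if missing:
        # Ask for one thing at a time to keep the spoken turn short.
        return {"action": "ask", "field": missing[0]}
    arguments = {name: collected[name] for name in required_fields}
    return {"action": "call_tool", "arguments": arguments}
```

For example, with `required_fields = ["appointment_id", "new_start"]` and only `appointment_id` collected, the function returns an `ask` step for `new_start` rather than letting the model guess a time.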

Use structure to guide the model

Structured context helps the model understand expectations. Typed schemas, clean formatting, and clear output rules reduce misinterpretation.

Helpful forms of structure include:

- Tool signatures with defined parameters

- Bullet lists for constraints

- JSON schemas for expected outputs

- Simple and predictable data formats

These patterns give the model consistent anchors. As a result, the agent produces more stable and reliable responses, especially when handling tasks that require precision.
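To make "tool signatures with defined parameters" concrete, here is a hedged sketch of a tool described with a JSON Schema style parameter block, plus a deliberately small validator for the arguments a model proposes. The tool `reschedule_appointment`, its parameters, and `validate_arguments` are hypothetical, and the validator covers only the few keywords used here. A production system would more likely rely on a full JSON Schema validator.

```python
# Hypothetical tool definition; names and fields are invented for illustration.
RESCHEDULE_TOOL = {
    "name": "reschedule_appointment",
    "description": "Move an existing appointment to a new time slot.",
    "parameters": {
        "type": "object",
        "properties": {
            "appointment_id": {"type": "string"},
            "new_start": {"type": "string", "description": "ISO 8601 datetime"},
            "notify_by_sms": {"type": "boolean"},
        },
        "required": ["appointment_id", "new_start"],
        "additionalProperties": False,
    },
}

# Only the JSON types used by the schema above are mapped.
_JSON_TYPES = {"string": str, "boolean": bool}


def validate_arguments(tool, arguments):
    """Return a list of problems; an empty list means the call looks valid."""
    schema = tool["parameters"]
    properties = schema["properties"]
    errors = []
    for name in schema.get("required", []):
        if name not in arguments:
            errors.append(f"missing required parameter: {name}")
    for name, value in arguments.items():
        if name not in properties:
            errors.append(f"unexpected parameter: {name}")
            continue
        type_name = properties[name]["type"]
        if not isinstance(value, _JSON_TYPES[type_name]):
            errors.append(f"{name} should be of type {type_name}")
    return errors
```

Checking arguments before the tool runs turns a malformed call into a clear error the agent can recover from, instead of a failure inside a downstream system.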

Building voice AI agents you can trust at scale

Reliability improves when context engineering becomes a standard practice. Agents are easier to troubleshoot. Behavior is more predictable. Teams can iterate faster.

This approach also supports better evaluations. By structuring context clearly, you can test agents more systematically and identify problems earlier. It becomes easier to trace issues and compare results across versions.
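One possible way to compare versions is to fingerprint each context layer separately, so that a change in behavior can be traced to the layer that changed. This is a sketch under assumptions, not a description of how Telnyx evaluates agents: `layer_fingerprints` and `changed_layers` are hypothetical helpers, and each layer is assumed to be JSON-serializable.

```python
import hashlib
import json


def layer_fingerprints(layers):
    """Map each layer name to a short hash of its content."""
    fingerprints = {}
    for name, content in layers.items():
        encoded = json.dumps(content, sort_keys=True).encode("utf-8")
        fingerprints[name] = hashlib.sha256(encoded).hexdigest()[:12]
    return fingerprints


def changed_layers(old, new):
    """List the layers whose content differs between two agent versions."""
    old_fp = layer_fingerprints(old)
    new_fp = layer_fingerprints(new)
    names = old_fp.keys() | new_fp.keys()
    return sorted(n for n in names if old_fp.get(n) != new_fp.get(n))
```

Storing the fingerprints next to each evaluation run makes it possible to say, for instance, that two runs differ only in their instructions while tools and examples stayed the same.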

A well-designed context is part of your system, not an afterthought. It shapes how your agent handles real-world calls and gives you the control needed to maintain quality over time.
